Best machine learning algorithms you should know

Machine learning is a key technology that businesses use to build tools that enhance their operations. To do that, they rely on machine learning algorithms that come in different shapes and sizes, serve different purposes, and work on different data sets. Choosing the right algorithm for the job is a large part of what makes machine learning and deep learning projects successful. That’s why it pays to know the different types of machine learning algorithms: it helps you get better results and build more advanced solutions.

Here’s an overview of the best machine learning algorithms you should know before starting your project.

What is meant by machine learning algorithms?

First things first: what is machine learning, and how do algorithms fit into the picture? A machine learning (ML) algorithm is a process or set of procedures that allows a model to adapt to the data with a specific objective set as the goal.

An ML algorithm specifies how the data is transformed from the input to output, helping the model to learn the appropriate mapping from input to output. That model specifies the mapping functions and holds the parameters in place, while the machine learning algorithm updates the parameters to help the model match its goal.
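
To make the split between model and algorithm concrete, here is a minimal sketch in Python. It assumes a one-parameter model (`y = w * x`) and uses gradient descent on the mean squared error as the learning algorithm. The function names, data, and learning rate are illustrative choices, not something prescribed above.

```python
def model(w, x):
    """The model: a mapping from input to output that holds one parameter, w."""
    return w * x


def train(xs, ys, lr=0.01, steps=500):
    """The algorithm: repeatedly nudge w to reduce the mean squared error."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (model(w, x) - y) * x for x, y in zip(xs, ys)) / n
        w -= lr * grad
    return w


# Toy data generated from y = 2 * x (an assumption made for this example).
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
w = train(xs, ys)
print(w)
```

The model never changes shape here. Only its parameter moves, and it is the algorithm that moves it.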

What are the algorithms used in machine learning?

Algorithms can model problems in many different ways. The easiest way to differentiate between ML algorithms is to compare them by the learning styles they can adopt. Generally, machine learning algorithms can adopt several learning styles that help to solve different problems.

Here are four learning styles in machine learning you need to know:

1 Supervised learning

In supervised learning, the input data serves as training data and comes with a known label or result – for example, the price at a time or spam/not-spam.

In this variant, the training process is critical for preparing a model that makes predictions and then is corrected when the predictions are wrong. The training process continues until the model achieves the appropriate level of accuracy. Classification and regression are examples of problems for this learning type.

2 Unsupervised learning

In unsupervised learning, input data isn’t labeled and doesn’t come with a known result. Data scientists prepare models by deducing the structures in the input data to extract general rules or reduce redundancy through mathematical processes. Unsupervised learning addresses problems such as association rule learning, dimensionality reduction, and clustering.

3 Semi-supervised learning

In this learning style, the input data is a mixture of labeled and unlabeled examples. The prediction problem is known, but the model needs to learn the structures for organizing data and making predictions on its own. This learning style is used to address problems such as regression and classification.

4 Reinforcement learning

Reinforcement learning (RL) is often listed alongside supervised and unsupervised learning as one of the basic machine learning paradigms. It focuses on how software agents should take actions in a given environment to maximize a specified notion of cumulative reward.

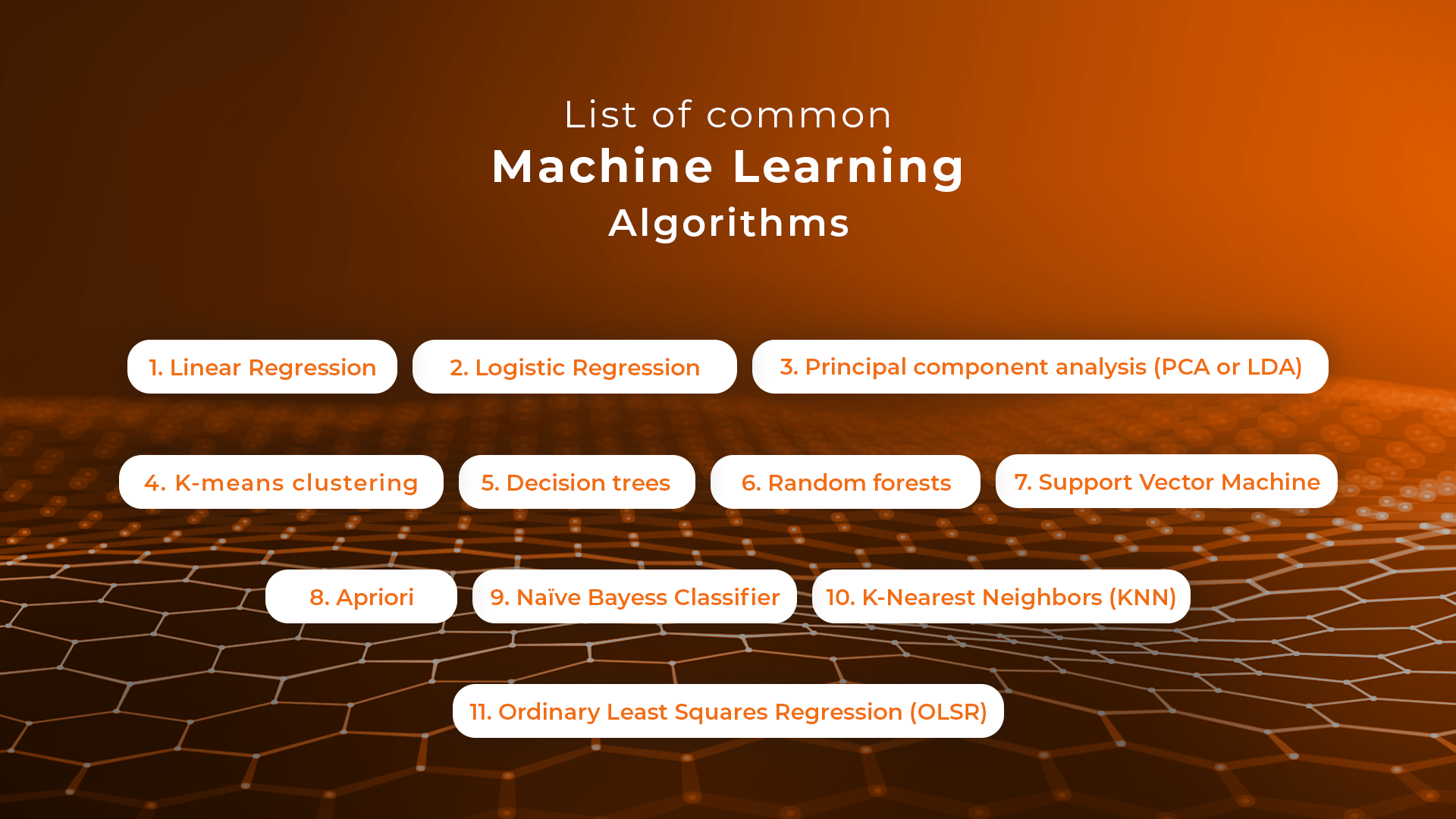

The best machine learning algorithms you should know

1 Linear Regression

Linear regression is an algorithm that models the relationship between two variables in the data set, an input and an output. For example, the algorithm can show how changing the input variable affects the output variable. The relationship is represented by a straight line fitted through the data points on a graph.

Linear regression is one of the most popular algorithms in machine learning because it’s transparent and requires little tuning to work. Practical applications of this algorithm include risk assessment and sales forecasting solutions.
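
As a rough illustration, the line for a single input variable can be fitted with the textbook closed-form formulas for slope and intercept. The sketch below uses made-up advertising and sales numbers, and the name `fit_line` is ours.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by least squares (single input variable)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: advertising spend versus sales.
ad_spend = [1.0, 2.0, 3.0, 4.0, 5.0]
sales = [3.1, 4.9, 7.2, 8.8, 11.0]
slope, intercept = fit_line(ad_spend, sales)
print(f"sales = {slope:.2f} * ad_spend + {intercept:.2f}")
```

The slope can be read directly as "how much the output changes when the input grows by one unit," which is where the transparency of the method comes from.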

2 Logistic regression

Logistic regression can be seen as linear regression with a non-linearity applied after the weights. Note that, despite its name, this algorithm is used for classification, not regression. The non-linearity squeezes the outputs toward the two class labels (for example +1/-1, or 1 and 0 in the case of the sigmoid), and the model can be trained with Gradient Descent or L-BFGS.

Logistic regression is used in Natural Language Processing (NLP) applications, where it often appears under the name of Maximum Entropy Classifier.
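
The sketch below shows one possible way to train such a classifier: a single input feature, a sigmoid applied after the weighted sum, and plain gradient descent on the log loss. The toy data, learning rate, and step count are assumptions chosen for illustration.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(xs, labels, lr=0.1, steps=2000):
    """Gradient descent on the log loss for a one-feature logistic model."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, labels)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def predict(x, w, b):
    """Return class 1 when the predicted probability reaches 0.5."""
    return 1 if sigmoid(w * x + b) >= 0.5 else 0


# Toy data: small values belong to class 0, large values to class 1.
xs = [0.5, 1.0, 1.5, 3.0, 3.5, 4.0]
labels = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(xs, labels)
print(predict(0.5, w, b), predict(4.0, w, b))
```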

3 Principal component analysis (PCA)

Principal component analysis is an unsupervised method that helps data scientists better understand the global properties of a data set that consists of vectors. It analyzes the covariance matrix of the data points to find the directions (principal components) along which the data varies the most. Projecting the data onto the top few components gives data points with reduced dimensions while keeping most of the variance.
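
Here is a small sketch of that idea for two-dimensional points, using only the Python standard library. It builds the covariance matrix, finds the direction of greatest variance with power iteration (one of several ways to get the leading eigenvector; real projects would normally use a linear algebra library), and projects the points onto it. The data and function names are illustrative.

```python
import math


def covariance_2d(points):
    """Center 2-D points and return them with their 2x2 covariance matrix."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    centered = [(x - mean_x, y - mean_y) for x, y in points]
    cxx = sum(x * x for x, _ in centered) / (n - 1)
    cyy = sum(y * y for _, y in centered) / (n - 1)
    cxy = sum(x * y for x, y in centered) / (n - 1)
    return centered, [[cxx, cxy], [cxy, cyy]]


def first_component(cov, iters=100):
    """Power iteration: converges to the direction of greatest variance."""
    v = [1.0, 0.0]
    for _ in range(iters):
        w = [cov[0][0] * v[0] + cov[0][1] * v[1],
             cov[1][0] * v[0] + cov[1][1] * v[1]]
        norm = math.hypot(w[0], w[1])
        v = [w[0] / norm, w[1] / norm]
    return v


# Toy points lying roughly along the diagonal y = x.
points = [(1.0, 1.1), (2.0, 1.9), (3.0, 3.2), (4.0, 3.8), (5.0, 5.0)]
centered, cov = covariance_2d(points)
component = first_component(cov)
# Reduce each 2-D point to a single coordinate along the component.
projected = [x * component[0] + y * component[1] for x, y in centered]
print(component, projected)
```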

4 K-means clustering

K-means clustering is a type of unsupervised learning algorithm that sorts a data set into a predefined number (k) of clusters. It returns results in the form of groups based on internal patterns in the data.

For example, you can use a K-means algorithm to sort web results for the word “cat,” and it will return the results in the form of groups. The main advantage of this algorithm is its speed: it often produces data groupings faster than other clustering algorithms.
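
A minimal sketch of the usual K-means loop (often called Lloyd's algorithm) looks like this: assign each point to its nearest centroid, then move each centroid to the mean of its points, and repeat. To keep the example deterministic, the starting centroids are picked by hand. That choice, like the data, is an assumption of this example.

```python
def dist2(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def mean_point(points):
    """Coordinate-wise mean of a list of points."""
    n = len(points)
    return tuple(sum(c) / n for c in zip(*points))


def kmeans(points, centroids, iters=10):
    """Alternate between assigning points and recomputing centroids."""
    clusters = []
    for _ in range(iters):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: dist2(p, centroids[i]))
            clusters[nearest].append(p)
        # Keep the old centroid if a cluster ends up empty.
        centroids = [mean_point(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return centroids, clusters


points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.6),
          (8.0, 8.0), (9.0, 9.5), (8.5, 9.0)]
centroids, clusters = kmeans(points, centroids=[points[0], points[3]])
print(centroids)
```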

5 Decision trees

A decision tree is made of branches that represent the outcomes of a series of decisions. The algorithm splits the data along these branches to predict response variables on the basis of past observations. It comes in handy for mapping out decisions and presents results visually, which makes findings easy to communicate.

Decision trees work best for smaller data sets and relatively low-stake decisions – otherwise, the long-tail visuals can be hard to decipher. The key advantage of this algorithm is that it allows showing multiple outcomes and tests without having to involve data scientists – it’s easy to use.
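
The building block of a decision tree is a single split. The sketch below picks the threshold on one numeric feature that gives the purest branches, measured with Gini impurity (one common criterion; others exist). A full tree would apply the same step recursively to each branch. The customer data is invented for illustration.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 means every label in the branch is the same."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_split(values, labels):
    """Try each observed value as a threshold; keep the purest split."""
    best_threshold, best_score = None, float("inf")
    for threshold in sorted(set(values)):
        left = [l for v, l in zip(values, labels) if v <= threshold]
        right = [l for v, l in zip(values, labels) if v > threshold]
        # Weighted average impurity of the two branches.
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best_score:
            best_threshold, best_score = threshold, score
    return best_threshold, best_score


# Hypothetical data: customer age and whether they bought a product.
ages = [22, 25, 30, 35, 40, 50]
bought = ["no", "no", "no", "yes", "yes", "yes"]
threshold, impurity = best_split(ages, bought)
print(f"split at age <= {threshold}, impurity {impurity}")
```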

6 Random forests

A random forest consists of a large number of individual decision trees that operate as an ensemble. Each tree in the random forest generates a class prediction, and the class that receives the highest number of votes becomes the model’s prediction. A large number of relatively uncorrelated models (trees) operating as a committee will usually outperform any of the individual constituent models.

The low correlation between these models is the strength of this approach because it allows the ensemble to produce predictions that are far more accurate than individual predictions. Note that decision trees protect each other from individual errors. While some trees may generate false predictions, others will generate the right ones, so as a group they will be able to move in the right direction.
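
The effect of voting can be seen in a small simulation. This is not a random forest implementation. It only models the committee step, under the simplifying assumptions that the task is binary and that every "tree" is right 70% of the time, independently of the others. Real trees are only partly uncorrelated, so the gain in practice is smaller.

```python
import random


def simulate_voting(n_trees=25, tree_accuracy=0.7, n_cases=2000, seed=0):
    """Compare one simulated tree with a majority vote over many of them."""
    rng = random.Random(seed)
    single_correct = 0
    ensemble_correct = 0
    for _ in range(n_cases):
        # True means this simulated tree predicted the right class.
        votes = [rng.random() < tree_accuracy for _ in range(n_trees)]
        if votes[0]:
            single_correct += 1
        if sum(votes) > n_trees / 2:
            ensemble_correct += 1
    return single_correct / n_cases, ensemble_correct / n_cases


single_acc, ensemble_acc = simulate_voting()
print(f"single tree: {single_acc:.2f}, majority vote: {ensemble_acc:.2f}")
```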

7 Support Vector Machine

Support Vector Machines (SVMs) are linear models similar to the linear and logistic regression we’ve discussed earlier. However, there’s one difference: they use a margin-based loss function (typically the hinge loss), which can be optimized with methods such as L-BFGS or SGD. SVMs learn a boundary that divides the data set into classes, which is helpful for future classifications.

The main idea behind SVM is separating data into classes while maximizing the margin between them, so that future data points are more likely to fall on the correct side of the boundary. This type of algorithm works best when the training data is clearly separable. However, with kernel functions it can also serve as a tool for processing nonlinear data. The financial sector makes use of Support Vector Machines thanks to their accuracy in classifying both current and future data sets.
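
As a sketch of the margin-based loss in action, the code below trains a linear SVM on two-dimensional points with stochastic (sub)gradient descent on the hinge loss plus a small L2 penalty. The labels are +1 and -1. The toy data, learning rate, and regularization strength are assumptions of this example, and a production system would normally rely on an established library instead.

```python
def train_linear_svm(points, labels, lr=0.01, reg=0.01, epochs=200):
    """SGD on the hinge loss max(0, 1 - y * score) with L2 regularization."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), y in zip(points, labels):
            margin = y * (w[0] * x1 + w[1] * x2 + b)
            # The L2 penalty gently shrinks the weights on every step.
            w = [wi - lr * reg * wi for wi in w]
            # Only points inside the margin (or misclassified) push the boundary.
            if margin < 1:
                w[0] += lr * y * x1
                w[1] += lr * y * x2
                b += lr * y
    return w, b


def predict(point, w, b):
    """Classify by the side of the boundary the point falls on."""
    score = w[0] * point[0] + w[1] * point[1] + b
    return 1 if score >= 0 else -1


points = [(-2.0, -1.0), (-1.0, -2.0), (-2.0, -2.0),
          (2.0, 1.0), (1.0, 2.0), (2.0, 2.0)]
labels = [-1, -1, -1, 1, 1, 1]
w, b = train_linear_svm(points, labels)
print(predict((3.0, 3.0), w, b), predict((-3.0, -3.0), w, b))
```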

8 Apriori

The Apriori algorithm is used a lot in market basket analysis. It’s based on the Apriori principle (every subset of a frequent itemset must also be frequent) and looks for associations between products by counting how often they appear together in a data set.

For example, if two values often occur together in a data set, the algorithm will conclude that A often leads to B. If customers often buy product A and product B together, the rule relating them will score highly, which helps companies like Google or Amazon predict product searches and purchases.
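
The sketch below covers the first two levels of an Apriori-style search on invented shopping baskets. It keeps only items that are frequent on their own, builds candidate pairs from those items alone (which is the pruning the Apriori principle allows), and then computes the confidence of the rule "bread leads to butter." The support threshold of 0.6 is an arbitrary choice for this example.

```python
from itertools import combinations


def support(itemset, transactions):
    """Fraction of transactions that contain every item in the itemset."""
    return sum(itemset <= t for t in transactions) / len(transactions)


def frequent_pairs(transactions, min_support):
    """Find frequent pairs, building candidates only from frequent items."""
    items = sorted({item for t in transactions for item in t})
    frequent_items = [i for i in items
                      if support({i}, transactions) >= min_support]
    pairs = {}
    for a, b in combinations(frequent_items, 2):
        s = support({a, b}, transactions)
        if s >= min_support:
            pairs[(a, b)] = s
    return pairs


# Hypothetical shopping baskets.
transactions = [
    {"bread", "butter", "milk"},
    {"bread", "butter"},
    {"bread", "jam"},
    {"butter", "milk"},
    {"bread", "butter", "jam"},
]
pairs = frequent_pairs(transactions, min_support=0.6)
# Confidence of "bread -> butter": how often butter appears given bread.
confidence = (support({"bread", "butter"}, transactions)
              / support({"bread"}, transactions))
print(pairs, confidence)
```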

9 Naive Bayes Classifier

This handy classification technique is based on Bayes’ Theorem combined with an assumption of independence among predictors. The algorithm assumes that the presence of a specific feature in a class is not related to the presence of any other feature in the same class.

For example, a fruit may be considered a banana if it’s yellow, curved, and about 15 cm long. Even if these features depend on each other or on the existence of other features, the algorithm treats them as contributing independently to the probability that this fruit is a banana. That’s why the algorithm bears the name “Naive.”

The algorithm offers a model that is easy to build and helpful in handling very large data sets. Despite its simplicity, it can sometimes outperform much more sophisticated classification methods.
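
Here is a minimal sketch of the fruit example with two categorical features (color and shape). It multiplies the class prior by one per-feature probability at a time, which is exactly the "naive" independence assumption. Add-one (Laplace) smoothing is included so that unseen feature values do not zero out a class. The training rows and the assumption of two possible values per feature are made up for illustration.

```python
from collections import Counter, defaultdict


def train_nb(rows, labels):
    """Count classes and, per class and feature position, each value seen."""
    class_counts = Counter(labels)
    feature_counts = defaultdict(Counter)
    for row, label in zip(rows, labels):
        for i, value in enumerate(row):
            feature_counts[(label, i)][value] += 1
    return class_counts, feature_counts


def predict_nb(row, class_counts, feature_counts, n_values=2):
    """Score each class as prior * product of smoothed feature probabilities."""
    total = sum(class_counts.values())
    scores = {}
    for label, count in class_counts.items():
        p = count / total
        for i, value in enumerate(row):
            # Add-one smoothing; n_values is the assumed number of
            # distinct values each feature can take.
            p *= (feature_counts[(label, i)][value] + 1) / (count + n_values)
        scores[label] = p
    return max(scores, key=scores.get), scores


rows = [("yellow", "curved"), ("yellow", "curved"), ("yellow", "curved"),
        ("red", "round"), ("red", "round"), ("yellow", "round")]
labels = ["banana", "banana", "banana", "apple", "apple", "apple"]
class_counts, feature_counts = train_nb(rows, labels)
prediction, scores = predict_nb(("yellow", "curved"), class_counts, feature_counts)
print(prediction, scores)
```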

10 K-Nearest Neighbors (KNN)

This is one of the simplest algorithms used in machine learning for classification and regression. KNN algorithms classify new data points on the basis of a similarity measure, such as a distance function. They perform classification by taking a majority vote among a data point’s k nearest neighbors and assigning the point to the class that is most common among them. The value of k affects accuracy: increasing it may improve results by smoothing out noise, but too large a value blurs the boundaries between classes.
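
The majority vote can be sketched in a few lines of Python. The example assumes Euclidean distance as the similarity measure and k = 3, and the labeled points are invented.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Classify query by majority vote among its k nearest training points."""
    neighbors = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Each training item is (point, class label).
train = [((1.0, 1.0), "A"), ((1.0, 2.0), "A"), ((2.0, 1.0), "A"),
         ((6.0, 6.0), "B"), ((7.0, 6.0), "B"), ((6.0, 7.0), "B")]
print(knn_predict(train, (2.0, 2.0)), knn_predict(train, (6.0, 5.0)))
```

Note that there is no training step. All the work happens at prediction time, which is why KNN can become slow on large data sets.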

11 Ordinary Least Squares Regression (OLSR)

Ordinary Least Squares Regression (OLSR) is a linear modeling technique data scientists use for estimating the unknown parameters of a linear regression model. It chooses the parameters that minimize the sum of squared differences between observed and predicted values. OLSR describes the relationship between a dependent variable and one or more independent variables.

The algorithm is applied in diverse fields such as economics, finance, medicine, and social sciences. Companies use it in machine learning and predictive analytics to predict specific outcomes on the basis of variables that change dynamically.
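
For more than one independent variable, the least squares parameters can be obtained by solving the normal equations (XᵀX)β = Xᵀy. The sketch below does this in plain Python with a small Gauss-Jordan solver so that it has no dependencies. In practice a numerical library would be the usual choice. The data is generated from y = 1 + 2·x1 + 3·x2, an assumption made so the recovered parameters are easy to check.

```python
def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def ols(rows, ys):
    """Return [intercept, coef_1, ...] minimizing the sum of squared errors."""
    X = [[1.0] + list(r) for r in rows]  # leading 1.0 models the intercept
    k = len(X[0])
    xtx = [[sum(x[i] * x[j] for x in X) for j in range(k)] for i in range(k)]
    xty = [sum(x[i] * y for x, y in zip(X, ys)) for i in range(k)]
    return solve(xtx, xty)


rows = [(1.0, 2.0), (2.0, 1.0), (3.0, 4.0), (4.0, 3.0), (5.0, 5.0)]
ys = [9.0, 8.0, 19.0, 18.0, 26.0]
beta = ols(rows, ys)
print(beta)
```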

We hope that this machine learning algorithms list helps you pick the right tools of the trade for your next machine learning project. If you’d like to learn more about Machine Learning, Data Science and Web Development, visit the Sunscrapers company blog.
