Deep Learning and Human Intelligence – Part 2 of 2

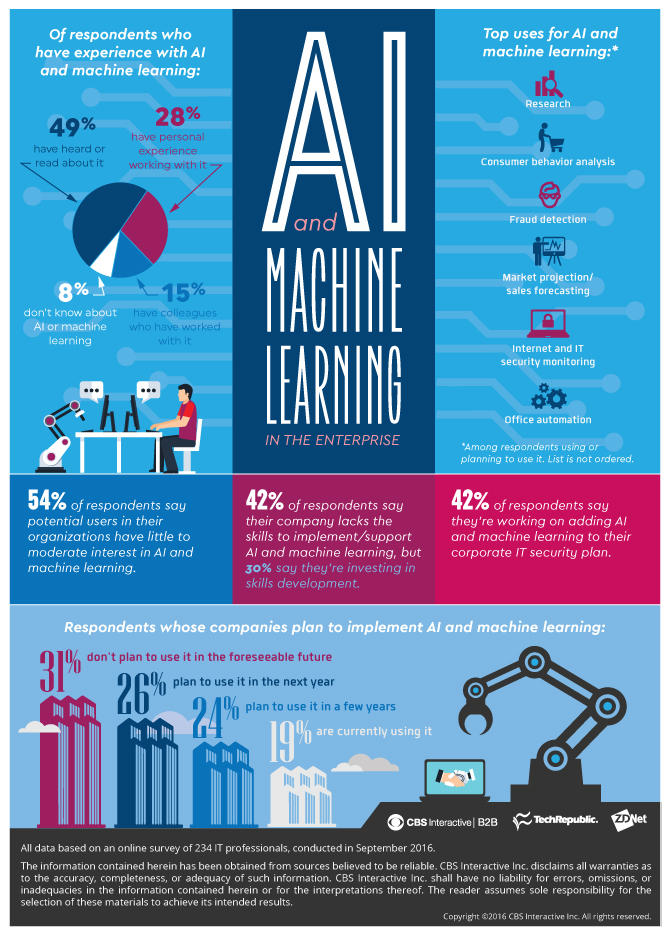

Data dependency is one of the biggest problems of Deep Learning architectures. The difficulty lies not so much in the Deep Learning algorithm as in the invisible structure of the data itself.

This is part 2 of 2 of the Article Series: Deep Learning and Human Intelligence.

We saw that the discovery of numbers was accompanied by many of what are today basic ideas of Machine Learning. But let us go back a little further, to a time when humankind had not yet fully discovered the concept of numbers. How would a person at such a time perceive quantity and the count of things? Some quantities are easily recognizable as patterns of objects: one sun, two trees, three children, four clouds and so on. Sets of objects are much simpler to count if all the objects of the set are present. In such a case it is sufficient to keep a one-to-one correspondence between two different sets, without any need for numbers, to make a judgement of crucial importance. Consider two enemies who go to war and wish to know which of them has the larger army. It is enough for one of them to assign a small stone to every enemy soldier and to do the same with his own soldiers. Once the two piles of stones are paired off, whichever pile has stones left over tells him which army is larger, without his ever needing to know the exact number of soldiers in either army.
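The stone-matching procedure above can be sketched in a few lines of Python. This is only an illustration under assumptions: the function name `compare_by_pairing` and the returned strings are invented here, and the soldiers are represented by arbitrary items. The point is that the code never computes a length or a count; it only pairs items off until one side runs out.

```python
from itertools import zip_longest


def compare_by_pairing(own_soldiers, enemy_soldiers):
    """Compare two collections by pairing items off, never counting either one.

    Hypothetical helper for illustration: each pairing step corresponds to
    removing one stone from each pile.
    """
    unmatched = object()  # sentinel meaning "this side has no partner left"
    for own, enemy in zip_longest(own_soldiers, enemy_soldiers, fillvalue=unmatched):
        if own is unmatched:
            return "enemy army is larger"
        if enemy is unmatched:
            return "own army is larger"
    # Both sides ran out at the same pairing step.
    return "armies are equal"


result = compare_by_pairing(["a", "b", "c"], ["x", "y"])
print(result)
```

Because the function only walks both collections in step, it would work equally well on streams whose size is never known in advance, which is exactly the situation of a person without numbers.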

But things can also be counted which are not directly visible and which do not allow a direct association with observable objects such as stones. Would a person at that time easily be able to grasp the fourth day from today, or five weeks from now, when even the concept of a week is already composite? Counting in this case is only possible if numbers have already been developed through direct observation and we use something similar to stones in our mind, i.e. a cognitive association, a number. Only then can one think of measuring at equidistant moments in time at all. This is the reason why such measurements were still cutting edge in the time of Galileo Galilei, as we have seen before. It is easy to assume that even when humans had started to count, such indirect quantities were not considered to be related to numbers. This implies that many concepts which we are accustomed today to regard as numbers were considered as belonging to different, unrelated clusters. Such a hypothesis is not even that far-fetched. Evidence of such a time is still present in some languages, like Japanese.

When we think of numbers, we associate them with the Hindu-Arabic numerals, but in Japanese the counting word depends not only on the size of the set (which is what we usually consider to be the number), but also on the kind of objects that make up the set. In Japanese one can speak of meeting rokunin (six people), visiting muttsu (six) cities and seeing roppa (six) birds, each time referring to the same number: six. In addition, many regular and irregular suffixes make the whole system quite complicated. The division of counting into so many clusters seems unnecessarily complicated today, but it can easily be understood from a point of view in which language and numbers were still forming and numbers were not yet a uniform concept. What one can learn from this is that the lack of a unifying concept implies an overly complex dependence on data, which is presently the case for Deep Learning and AI in general.

Although Deep Learning was a breakthrough in the development of Artificial Intelligence, the tasks such algorithms can perform were, and remain, very narrow. A model may identify birds or cancer cells, but it will miss the song of the birds or the cry of the patient with cancer. When IBM's Watson system played the famous game Jeopardy! against two former champions and won, it still made several simple mistakes, like giving the same wrong answer as the player before it. If it could have listened to the other contestant's answer, it could have discarded its top answer and given its second one, which was the right one. In other words, Deep Learning architectures are not multi-tasking, and it is for this reason that some AI experts call them intelligent idiots.

Imagine spending years and years learning to play a game and then, having mastered it and wishing to play a different game, being unable to use any of that past gaming experience for the new one and needing to learn everything from scratch. That could be quite depressing and would make life needlessly difficult. This is the reason why people involved in Deep Learning worked from early on to develop multi-tasking Deep Learning architectures. Along the way a different method of using Deep Learning was discovered: transfer learning. Because it takes a very long time for a Deep Learning architecture to learn, transfer learning reuses an already trained architecture for a slightly different task. It is similar to the use of past experience in solving new problems, and its advantage is that reusing what has already been learned dramatically reduces the amount of new data needed to perform the new task. Still, transfer learning is far from permitting Deep Learning architectures to perform any kind of task by learning from only one master data set.
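The idea of reusing what was already learned can be illustrated with a deliberately tiny stand-in, sketched here under strong assumptions: instead of a Deep Learning architecture, the "model" is a straight line `y = w*x + b`, the two tasks (`task_a`, `task_b`) are invented toy data sets, and "transfer" simply means starting the training on the new task from the parameters learned on the old one. Real transfer learning usually reuses whole layers of a network, so this shows the principle only.

```python
def train(w, b, data, steps, lr=0.05):
    """Plain gradient descent on mean squared error for the model y = w*x + b."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def mse(w, b, data):
    """Mean squared error of the line y = w*x + b on the given data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


# Task A (assumed toy data): many examples of y = 2x + 1.
task_a = [(k / 10, 2 * (k / 10) + 1) for k in range(-20, 21)]
# Task B (assumed toy data): a related task, y = 2x + 1.5, with only 3 examples.
task_b = [(x, 2 * x + 1.5) for x in (-1.0, 0.0, 1.0)]

# Long, expensive training on the old task.
pretrained = train(0.0, 0.0, task_a, steps=500)

# Same small training budget on the new task, with and without transfer.
transferred = train(*pretrained, task_b, steps=5)
from_scratch = train(0.0, 0.0, task_b, steps=5)

print("error with transfer:   ", mse(*transferred, task_b))
print("error from scratch:    ", mse(*from_scratch, task_b))
```

With the same five training steps and the same three examples, the model that starts from the pretrained parameters ends with a much smaller error on the new task than the one that starts from zero, because the slope it needs was already learned on the old task.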

Managing a unique master data set which includes all the data needed to reach human accuracy in any human activity is not enough. One needs another ingredient, the so-called cost function, which in this case translates to the human brain. In it lie all our experiences and knowledge. How long does it take to collect enough of both to handle a normal human life? How long to achieve our highest potential? If not a lifetime, then at least decades. And this also applies to our jobs: as an IT developer, a Data Scientist or a university professor. We will always have to learn new things, learn how to use them, and learn how to expand the limits of our perception. The vast amount of information that science has gathered over the last four centuries makes it impossible for any human being to become an expert in all of it. Thus, one has to specialize. After university, everyone has to choose a subject which is appealing enough to be studied for decades. Here is the first sign of what can be understood as data segmentation and dependency. The improvements a specialist contributes can come in various forms: an algorithm in IT, a theorem in mathematics, a new way of looking at particles in physics, a new method of scanning for diseases in biology, and so on. But there is a price to pay for specialization: the inability to be an expert in another field or subfield. (Subfields induce limitation!)

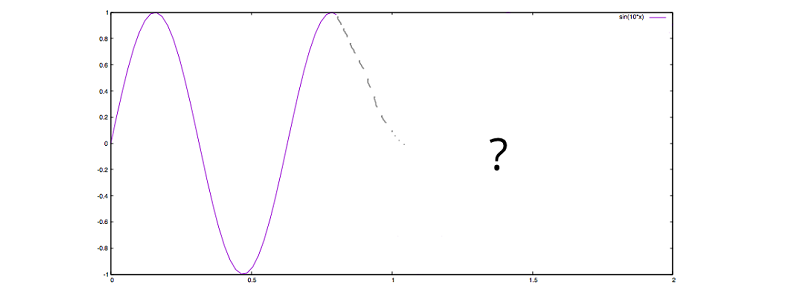

Let's take the Deep Learning algorithm itself as an example. For IT and much of everyday life it is a real breakthrough, but it lacks a scientific, that is mathematical, foundation. There is no theorem which proves that it will find (converge to, to use the mathematical term) the global optimum. This does not appear to be of any great consequence as long as the algorithm is so efficient, except that when new data is added and the same architecture is trained again, there is no guarantee whatsoever that the new model will be as good as the old one, let alone better. On the contrary, it is just as possible that the new model trained on the new data will perform worse than the old one, and one then has to invest time again in finding a better model, or even a different architecture. With a mathematical proof of convergence, on the other hand, it would always be possible to know under which conditions convergence can be achieved. In other words, without deep knowledge of mathematics, any proof of a consistent Deep Learning algorithm is impossible.
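The missing guarantee can be made concrete with a toy example, given here as a sketch under assumptions: the one-dimensional function `f(x) = x^4 - 3x^2 + x` is chosen only for illustration and is not a Deep Learning cost function, but like such cost functions it has more than one valley. Gradient descent, the basic optimization idea behind Deep Learning training, ends up in a different valley depending on where it starts, and only one of them is the global optimum.

```python
def f(x):
    """Toy non-convex cost (assumed for illustration): two separate valleys."""
    return x**4 - 3 * x**2 + x


def df(x):
    """Derivative of f."""
    return 4 * x**3 - 6 * x + 1


def gradient_descent(x, steps=2000, lr=0.01):
    """Follow the negative gradient from the starting point x."""
    for _ in range(steps):
        x -= lr * df(x)
    return x


x_left = gradient_descent(-2.0)   # starts on the left slope
x_right = gradient_descent(2.0)   # starts on the right slope

print("from -2.0:", x_left, "cost", f(x_left))
print("from +2.0:", x_right, "cost", f(x_right))
```

Both runs converge, but to different points, and the run started at 2.0 settles in the shallower valley with the higher cost. Nothing in the procedure itself reveals that a better solution exists elsewhere, which is the situation described above when a model is retrained.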

The same situation holds for any other crossover between fields. A mathematical genius will make a lousy biologist, a great chemist will make an average economist, and a top economist will be a poor physicist. Knowledge is difficult to transfer, and this is also true of everyday experience. We learn from a very young age to play a game like football, but are unable to use those reflexes to play basketball or tennis any better than a normal beginner. We learn a new language after years and years of practice, but are unable to use the way we learned it to learn other languages faster. We are trapped within the knowledge we developed from the data we used. It is for this reason that we cannot take the knowledge a mathematician has developed over decades and use it in biology or psychology, even if that knowledge is very advanced. Instead of thinking in knowledge, we think in data. This is similar to the people who were unaware of numbers and used sets (data) to work with quantities. Numbers must have been very difficult to transmit from one person to another in former times.

Just think of all the great achievements of our society, like relativity, quantum mechanics, DNA, machines, etc. Such discoveries are the essence of human knowledge and took millennia to form and centuries to crystallize. Still, all this knowledge is held captive in the data, in the special frame in which it was discovered, and has never had the chance to escape. Imagine being able to use ideas and causal patterns like those of relativity or quantum mechanics in biology or history, or the concept of DNA in mathematics or art. Imagine a musical composition in which the laws governing the notes allow a “tunnel effect” as in quantum mechanics, let lower notes warp the musical scales as in relativity, or twist two musical scales into a helix-like interplay. How many ways of experiencing life await us! Or think of the knowledge hidden in mathematics which could help develop new medicines, but cannot be transmitted.

Another example of the connection between knowledge and the data through which we obtain it is children. They are the classic test of whether one is able to explain something. Take as an example something simple that they can observe often, like lightning and thunder. Ordinary concepts like particles, charge, waves, propagation, medium of propagation, etc. become so complicated to present by means other than those through which they were discovered that it becomes nearly impossible to explain to children how it works and that they do not need to fear it. Still, one can use analogy (i.e., transfer) to make an explanation possible. Instead of particles one can use balls; for charge one can use hardness; waves can be shown with a string by keeping one end fixed and waving the other; propagation is the movement of the waves from one end of the string to the other; the medium of propagation is the difference between walking in air and walking in water; and so on. Although it is difficult, analogies can be found which enable us to explain even to children how complex phenomena work.

The same is true of Deep Learning. The model, that is, the knowledge it can extract from the data, can be expressed only through that data. There is no transformation of the knowledge from one type of data to another. If such a transformation existed, Deep Learning would be able to learn any human task from a single set of data, a master data set. Without such a master data set and a corresponding cost function it will be nearly impossible to develop AI that mimics human behavior. In other words, without understanding how our mind works, and how to crystallize from this the data needed, AI will still need to look at all activities separately. It also implies that AIs are restricted to the human understanding of reality and of themselves. Only with such a characteristic of a living being can development of one's own occur, and this holds for AI as well.

Alice Albrecht is a research engineer at
